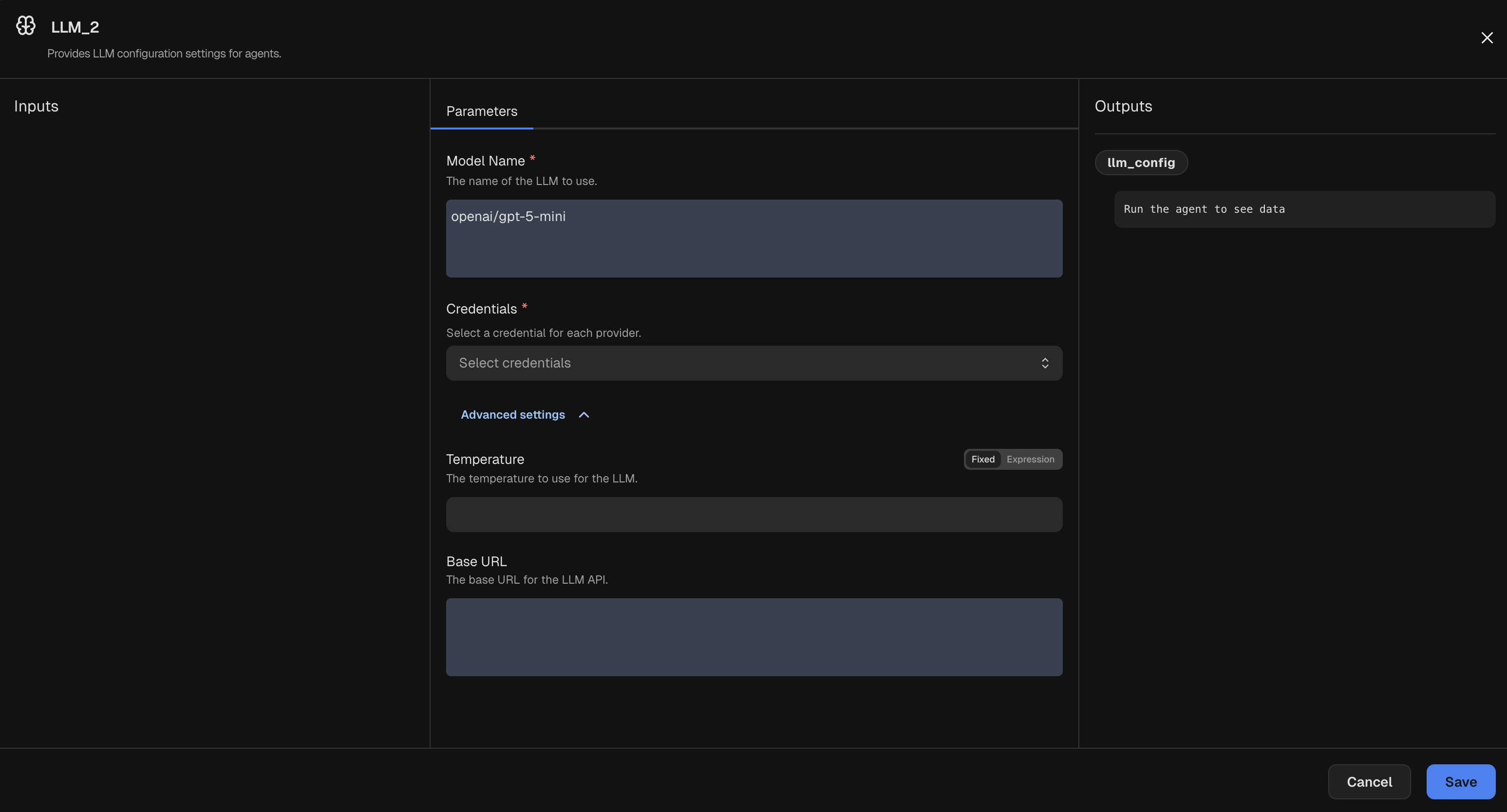

Parameters

The model identifier to use. Must start with the provider name, e.g., openai/, anthropic/, etc., unless using a custom base URL.

Controls randomness for the model.

Custom base URL for the model provider.

Provider-specific API version to send with the request (for example, Azure OpenAI).

Sets the model’s reasoning effort when supported. Valid values: none, minimal, low, medium, high, xhigh, default.

Extra keyword arguments passed directly to the underlying model provider.
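The two constraints described above (the provider prefix on the model identifier and the fixed set of reasoning effort values) can be checked before a request is sent. The following is a minimal sketch, not the node's actual validation logic: the function names `provider_prefix` and `check_reasoning_effort` are hypothetical, the model identifiers are placeholders, and it assumes that supplying a custom base URL simply makes the prefix optional.

```python
from typing import Optional

# Reasoning effort values as listed in the parameter description.
REASONING_EFFORTS = {"none", "minimal", "low", "medium", "high", "xhigh", "default"}


def provider_prefix(model_name: str, base_url: Optional[str] = None) -> Optional[str]:
    """Return the provider prefix of a 'provider/model' identifier.

    Assumption: when a custom base URL is supplied, a missing prefix is
    accepted and None is returned instead of raising.
    """
    provider, sep, model = model_name.partition("/")
    if sep and provider and model:
        return provider
    if base_url:
        return None
    raise ValueError(
        f"model identifier {model_name!r} must start with a provider name, e.g. 'openai/'"
    )


def check_reasoning_effort(value: Optional[str]) -> Optional[str]:
    """Reject values outside the documented set; None means 'not set'."""
    if value is not None and value not in REASONING_EFFORTS:
        raise ValueError(f"unsupported reasoning effort: {value!r}")
    return value


# Placeholder identifiers, used for illustration only.
print(provider_prefix("openai/example-model"))
print(provider_prefix("local-model", base_url="http://localhost:8000/v1"))
print(check_reasoning_effort("medium"))
```

Whether the provider itself honors a given reasoning effort value depends on the model, so a check like this only catches typos, not unsupported combinations.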

Credentials

Select an LLM Credential for the provider used by Model Name (for example, OpenAI, Anthropic, Gemini, or xAI).

Inputs

This node has no inputs.

Outputs

| Field | Description |
|---|---|
| model_name | Model identifier used by the provider. |
| temperature | Temperature value applied to the request. |
| base_url | Custom base URL, if supplied. |
| api_key | API key sourced from the selected credential. |
| api_version | API version passed to the provider, if supplied. |
| reasoning_effort | Reasoning effort level sent to the provider, if supported. |
| organization | Organization or account identifier from the credential, if set. |
| extra_args | Arbitrary provider-specific arguments, if provided. |
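
To illustrate how a downstream step might consume these output fields, the sketch below models them as a Python dataclass. This is an assumption-laden illustration rather than the node's real output type: the class name `ModelConfig` and the method `to_request_kwargs` are hypothetical, the sample values are placeholders, and the choice to merge `extra_args` last (so they can override other fields) is an assumption.

```python
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, Optional


@dataclass
class ModelConfig:
    """Hypothetical container mirroring the output fields in the table above."""

    model_name: str
    temperature: float
    api_key: str
    base_url: Optional[str] = None          # only set when a custom base URL is supplied
    api_version: Optional[str] = None       # only set when supplied
    reasoning_effort: Optional[str] = None  # only set when supported and supplied
    organization: Optional[str] = None      # only set when the credential provides it
    extra_args: Dict[str, Any] = field(default_factory=dict)

    def to_request_kwargs(self) -> Dict[str, Any]:
        """Flatten the config, dropping unset optional fields.

        Assumption: extra_args are merged last and may override other keys.
        """
        values = asdict(self)
        extra = values.pop("extra_args")
        kwargs = {key: value for key, value in values.items() if value is not None}
        kwargs.update(extra)
        return kwargs


# Placeholder values, used for illustration only.
config = ModelConfig(
    model_name="openai/example-model",
    temperature=0.2,
    api_key="placeholder-key",
    extra_args={"top_p": 0.9},
)
print(config.to_request_kwargs())
```

Dropping unset fields keeps optional outputs such as base_url and api_version out of the request unless they were actually supplied.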